- easy to install

- low on system resources

- easy to customize

I had the opportunity to work on the ELK (Elasticsearch, Logstash and Kibana) observability stack. It was so popular that I gave no second thought to adding it to my skill set. Seniors would assign me tickets to spin up VMs and start collecting logs. It has been a while since I last used this stack.

This summer I wanted to update my skill set with current tools. Somewhere in my mind I had this information that Elasticsearch has been widely criticized for its new licensing, under which it is no longer open source. It is definitely a turn-off for me. Many companies that are highly dependent on it will see no impact, as they can afford the fee. But some small projects might get impacted. Plus, I never enjoyed reading licenses that are not simple.

Terraform too changed its license and is no longer open source. Since I had to update my tool set, I did a PoC on OpenTofu and the LPG (Loki, Prometheus and Grafana) observability stack. OpenTofu works the same as Terraform and I found no difference. The LPG stack, however, was easier to use, and its resource footprint is quite small as it doesn't build a full-text index like Elasticsearch.

Today I found out that Elasticsearch is going open source again. It is a welcome move. It definitely means they have lost some business. I hope Terraform follows along.

We will be using Gorilla mux to avoid writing a lot of boilerplate. First we refactor: we replace ServeMux.

/handlers/products.go

Implementing Middleware functions

type MiddlewareFunc func(http.Handler) http.Handler
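To make the type above concrete, here is a minimal sketch of a middleware with that shape, using only the standard library. The `addHeader` middleware, the `X-Checked` header and the `callWrapped` helper are made-up names for illustration; they are not part of Gorilla mux or of this project.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// MiddlewareFunc has the same shape as the type quoted above.
type MiddlewareFunc func(http.Handler) http.Handler

// addHeader is a hypothetical middleware: it sets a response header
// and then passes the request on to the wrapped handler.
func addHeader(next http.Handler) http.Handler {
	return http.HandlerFunc(func(rw http.ResponseWriter, r *http.Request) {
		rw.Header().Set("X-Checked", "true")
		next.ServeHTTP(rw, r)
	})
}

// callWrapped sends one in-memory GET request through the middleware and
// returns the header value and the body written by the inner handler.
func callWrapped() (string, string) {
	inner := http.HandlerFunc(func(rw http.ResponseWriter, r *http.Request) {
		fmt.Fprint(rw, "products")
	})
	var mw MiddlewareFunc = addHeader
	rec := httptest.NewRecorder()
	mw(inner).ServeHTTP(rec, httptest.NewRequest(http.MethodGet, "/", nil))
	return rec.Header().Get("X-Checked"), rec.Body.String()
}

func main() {
	header, body := callWrapped()
	fmt.Println(header, body)
}
```

Because a middleware both takes and returns an http.Handler, several of them can be chained by nesting the calls.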

For creating objects using REST api, we either use POST or PUT

/GET/ retrieves a representation of the resource at the specified URI. The body of the response message contains the details of the requested resource.

/POST/ creates a new resource at the specified URI. The body of the request message provides the details of the new resource. Note that POST can also be used to trigger operations that don’t actually create resources.

/PUT/ either creates or replaces the resource at the specified URI. The body of the request message specifies the resource to be created or updated.

/PATCH/ performs a partial update of a resource. The request body specifies the set of changes to apply to the resource.

/DELETE/ removes the resource at the specified URI.

Source: https://learn.microsoft.com/en-us/azure/architecture/best-practices/api-design

Use POST for creating new resources and use PUT to update resources.

Adding POST method /handlers/products.go

package handlers

import (

"log"

"net/http"

"github.com/dileepkushwaha/server/data"

)

type Products struct {

l *log.Logger

}

func NewProducts(l *log.Logger) *Products {

return &Products{l}

}

func (p *Products) ServeHTTP(rw http.ResponseWriter, r *http.Request) {

if r.Method == http.MethodGet {

p.getProducts(rw, r)

return

}

if r.Method == http.MethodPost {

p.addProduct(rw, r)

return

}

//catch all

rw.WriteHeader(http.StatusMethodNotAllowed)

}

func (p *Products) getProducts(rw http.ResponseWriter, r *http.Request) {

lp := data.GetProducts()

err := lp.ToJSON(rw)

if err != nil {

http.Error(rw, "Unable to marshal json", http.StatusInternalServerError)

}

}

func (p *Products) addProduct(rw http.ResponseWriter, r *http.Request) {

p.l.Println("handle POST Product")

}

Sending a POST request with curl, using the verbose option -v:

curl -v localhost:8080 -d '{}'| jq

* Trying 127.0.0.1:8080...

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

0 0 0 0 0 0 0 0 --:--:-- --:--:-- --:--:-- 0* Connected to localhost (127.0.0.1) port 8080 (#0)

> POST / HTTP/1.1

> Host: localhost:8080

> User-Agent: curl/7.81.0

> Accept: */*

> Content-Length: 2

> Content-Type: application/x-www-form-urlencoded

>

} [2 bytes data]

* Mark bundle as not supporting multiuse

< HTTP/1.1 200 OK

< Date: Thu, 15 Aug 2024 18:36:59 GMT

< Content-Length: 0

<

100 2 0 0 100 2 0 402 --:--:-- --:--:-- --:--:-- 500

* Connection #0 to host localhost left intact

Next we need to convert the POST data into a Product object, using json.Decoder: https://pkg.go.dev/encoding/json#Decoder

/data/products.go

package data

import (

"encoding/json"

"io"

"time"

)

// Product defines the structure for an API product

type Product struct {

ID int `json:"id"`

Name string `json:"name"`

Description string `json:"description"`

Price float32 `json:"price"`

SKU string `json:"sku"`

CreatedOn string `json:"-"`

UpdatedOn string `json:"-"`

DeletedOn string `json:"-"`

}

func (p *Product) FromJSON(r io.Reader) error {

e := json.NewDecoder(r)

return e.Decode(p)

}

// Products is a collection of Product

type Products []*Product

// ToJSON serializes the contents of the collection to JSON

// NewEncoder provides better performance than json.Marshal as it does not

// have to buffer the output into an in memory slice of bytes

// this reduces allocations and the overheads of the service

//

// https://golang.org/pkg/encoding/json/#NewEncoder

func (p *Products) ToJSON(w io.Writer) error {

e := json.NewEncoder(w)

return e.Encode(p)

}

// GetProducts returns a list of products

func GetProducts() Products {

return productList

}

// productList is a hard coded list of products for this

// example data source

var productList = []*Product{

&Product{

ID: 1,

Name: "Latte",

Description: "Frothy milky coffee",

Price: 2.45,

SKU: "abc323",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

&Product{

ID: 2,

Name: "Espresso",

Description: "Short and strong coffee without milk",

Price: 1.99,

SKU: "fjd34",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

}

/handlers/products.go

package handlers

import (

"log"

"net/http"

"github.com/dileepkushwaha/server/data"

)

type Products struct {

l *log.Logger

}

func NewProducts(l *log.Logger) *Products {

return &Products{l}

}

func (p *Products) ServeHTTP(rw http.ResponseWriter, r *http.Request) {

if r.Method == http.MethodGet {

p.getProducts(rw, r)

return

}

if r.Method == http.MethodPost {

p.addProduct(rw, r)

return

}

//catch all

rw.WriteHeader(http.StatusMethodNotAllowed)

}

func (p *Products) getProducts(rw http.ResponseWriter, r *http.Request) {

lp := data.GetProducts()

err := lp.ToJSON(rw)

if err != nil {

http.Error(rw, "Unable to marshal json", http.StatusInternalServerError)

}

}

func (p *Products) addProduct(rw http.ResponseWriter, r *http.Request) {

p.l.Println("handle POST Product")

prod := &data.Product{}

err := prod.FromJSON(r.Body)

if err != nil {

http.Error(rw, "Unable to Unmarshal json", http.StatusBadRequest)

return

}

p.l.Printf("Prod: %#v", prod)

}

On running the server and sending a POST request: curl -v localhost:8080 -d '{"id": 3, "name": "frapuchinno", "description": "nice cup of tea"}' | jq

We get the following output on the server:

product-api2024/08/16 00:44:57 Prod: &data.Product{ID:3, Name:"frapuchinno", Description:"nice cup of tea", Price:0, SKU:"", CreatedOn:"", UpdatedOn:"", DeletedOn:""}

Next we will store the received data.

/data/products.go

//add these lines

func AddProduct(p *Product) {

p.ID = getNextID()

productList = append(productList, p)

}

func getNextID() int {

lp := productList[len(productList)-1]

return lp.ID + 1

}
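One thing to watch in getNextID: it indexes the last element, so it would panic if the list were ever empty. Below is a minimal sketch of a variant that handles that case. The `nextID` name, the trimmed-down `Product` type and the choice to start at 1 are assumptions made for illustration.

```go
package main

import "fmt"

// Product is trimmed to the one field this sketch needs.
type Product struct {
	ID int
}

// nextID returns 1 for an empty list, otherwise the last ID plus one.
func nextID(list []*Product) int {
	if len(list) == 0 {
		return 1
	}
	return list[len(list)-1].ID + 1
}

func main() {
	fmt.Println(nextID(nil))
	fmt.Println(nextID([]*Product{{ID: 1}, {ID: 2}}))
}
```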

/handlers/products.go

//add these lines

func (p *Products) addProduct(rw http.ResponseWriter, r *http.Request) {

p.l.Println("handle POST Product")

prod := &data.Product{}

err := prod.FromJSON(r.Body)

if err != nil {

http.Error(rw, "Unable to Unmarshal json", http.StatusBadRequest)

return

}

//p.l.Printf("Prod: %#v", prod)

data.AddProduct(prod)

}

Now when we send JSON data, it is received and stored on the server. Next we would like to update a product that is already stored. We will use PUT for this.

/data/products.go

package data

import (

"encoding/json"

"fmt"

"io"

"time"

)

// Product defines the structure for an API product

type Product struct {

ID int `json:"id"`

Name string `json:"name"`

Description string `json:"description"`

Price float32 `json:"price"`

SKU string `json:"sku"`

CreatedOn string `json:"-"`

UpdatedOn string `json:"-"`

DeletedOn string `json:"-"`

}

func (p *Product) FromJSON(r io.Reader) error {

e := json.NewDecoder(r)

return e.Decode(p)

}

// Products is a collection of Product

type Products []*Product

// ToJSON serializes the contents of the collection to JSON

// NewEncoder provides better performance than json.Marshal as it does not

// have to buffer the output into an in memory slice of bytes

// this reduces allocations and the overheads of the service

//

// https://golang.org/pkg/encoding/json/#NewEncoder

func (p *Products) ToJSON(w io.Writer) error {

e := json.NewEncoder(w)

return e.Encode(p)

}

// GetProducts returns a list of products

func GetProducts() Products {

return productList

}

func AddProduct(p *Product) {

p.ID = getNextID()

productList = append(productList, p)

}

func UpdateProduct(id int, p *Product) error {

_, pos, err := findProduct(id)

if err != nil {

return err

}

p.ID = id

productList[pos] = p

return nil

}

var ErrProductNotFound = fmt.Errorf("Product not found")

func findProduct(id int) (*Product, int, error) {

for i, p := range productList {

if p.ID == id {

return p, i, nil

}

}

return nil, -1, ErrProductNotFound

}

func getNextID() int {

lp := productList[len(productList)-1]

return lp.ID + 1

}

// productList is a hard coded list of products for this

// example data source

var productList = []*Product{

&Product{

ID: 1,

Name: "Latte",

Description: "Frothy milky coffee",

Price: 2.45,

SKU: "abc323",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

&Product{

ID: 2,

Name: "Espresso",

Description: "Short and strong coffee without milk",

Price: 1.99,

SKU: "fjd34",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

}

/handlers/products.go

package handlers

import (

"log"

"net/http"

"regexp"

"strconv"

"github.com/dileepkushwaha/server/data"

)

type Products struct {

l *log.Logger

}

func NewProducts(l *log.Logger) *Products {

return &Products{l}

}

func (p *Products) ServeHTTP(rw http.ResponseWriter, r *http.Request) {

// handle the request for a list of products

if r.Method == http.MethodGet {

p.getProducts(rw, r)

return

}

if r.Method == http.MethodPost {

p.addProduct(rw, r)

return

}

if r.Method == http.MethodPut {

p.l.Println("PUT", r.URL.Path)

// expect the id in the URI

reg := regexp.MustCompile(`/([0-9]+)`)

g := reg.FindAllStringSubmatch(r.URL.Path, -1)

if len(g) != 1 {

p.l.Println("Invalid URI more than one id")

http.Error(rw, "Invalid URI", http.StatusBadRequest)

return

}

if len(g[0]) != 2 {

p.l.Println("Invalid URI more than one capture group")

http.Error(rw, "Invalid URI", http.StatusBadRequest)

return

}

idString := g[0][1]

id, err := strconv.Atoi(idString)

if err != nil {

p.l.Println("Invalid URI unable to convert to number", idString)

http.Error(rw, "Invalid URI", http.StatusBadRequest)

return

}

p.updateProducts(id, rw, r)

return

}

// catch all

// if no method is satisfied return an error

rw.WriteHeader(http.StatusMethodNotAllowed)

}

func (p *Products) getProducts(rw http.ResponseWriter, r *http.Request) {

lp := data.GetProducts()

err := lp.ToJSON(rw)

if err != nil {

http.Error(rw, "Unable to marshal json", http.StatusInternalServerError)

}

}

func (p *Products) addProduct(rw http.ResponseWriter, r *http.Request) {

p.l.Println("handle POST Product")

prod := &data.Product{}

err := prod.FromJSON(r.Body)

if err != nil {

http.Error(rw, "Unable to Unmarshal json", http.StatusBadRequest)

return

}

//p.l.Printf("Prod: %#v", prod)

data.AddProduct(prod)

}

func (p *Products) updateProducts(id int, rw http.ResponseWriter, r *http.Request) {

p.l.Println("handle PUT Product")

prod := &data.Product{}

err := prod.FromJSON(r.Body)

if err != nil {

http.Error(rw, "Unable to Unmarshal json", http.StatusBadRequest)

return

}

//p.l.Printf("Prod: %#v", prod)

err = data.UpdateProduct(id, prod)

if err == data.ErrProductNotFound {

http.Error(rw, "Product not found", http.StatusNotFound)

return

}

if err != nil {

http.Error(rw, "Unable to update product", http.StatusInternalServerError)

return

}

}
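The ID extraction in the PUT branch above could also be pulled out into a small helper, which keeps ServeHTTP shorter and makes the parsing easy to check on its own. This is only a sketch: `parseID`, `idPattern` and `errInvalidURI` are names chosen here, not part of the code above.

```go
package main

import (
	"errors"
	"fmt"
	"regexp"
	"strconv"
)

// idPattern is compiled once at package level instead of on every request.
var idPattern = regexp.MustCompile(`/([0-9]+)`)

var errInvalidURI = errors.New("invalid URI")

// parseID returns the numeric ID in path. It fails when the path
// contains no ID, more than one ID, or a number that does not fit an int.
func parseID(path string) (int, error) {
	g := idPattern.FindAllStringSubmatch(path, -1)
	if len(g) != 1 || len(g[0]) != 2 {
		return 0, errInvalidURI
	}
	id, err := strconv.Atoi(g[0][1])
	if err != nil {
		return 0, errInvalidURI
	}
	return id, nil
}

func main() {
	fmt.Println(parseID("/2"))
	fmt.Println(parseID("/"))
	fmt.Println(parseID("/1/2"))
}
```

The handler would then call parseID(r.URL.Path) and answer with http.StatusBadRequest on any error.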

When working with secrets, such as API keys, passwords, or confidential scripts, the last thing you want is to leave traces of these sensitive commands in your shell history. By using this technique, you ensure that these commands don’t get saved in the history file, reducing the risk of accidental exposure.


The http.ListenAndServe function takes two parameters: addr, a string, and handler, an http.Handler.

package main

import "net/http"

func main(){

http.ListenAndServe(":8080", nil)

//addr can be an IP address followed by a port.

//A bare port means every IP address on the server.

}

Running the above code returns the following:

curl -v http://localhost:8080

* Trying 127.0.0.1:8080...

* Connected to localhost (127.0.0.1) port 8080 (#0)

> GET / HTTP/1.1

> Host: localhost:8080

> User-Agent: curl/7.81.0

> Accept: */*

>

* Mark bundle as not supporting multiuse

< HTTP/1.1 404 Not Found

< Content-Type: text/plain; charset=utf-8

< X-Content-Type-Options: nosniff

< Date: Mon, 12 Aug 2024 15:42:04 GMT

< Content-Length: 19

<

404 page not found

* Connection #0 to host localhost left intact

Since no handler is registered, the server returns a 404. Requests can be handled by registering a function with http.HandleFunc(). The documentation shows how http.HandleFunc is used: https://pkg.go.dev/net/http#HandleFunc The implementation of DefaultServeMux can be seen at https://go.dev/src/net/http/server.go The updated code is:

package main

import (

"log"

"net/http"

)

func main() {

http.HandleFunc("/", func(http.ResponseWriter, *http.Request) {

log.Println("hello world")

//HandleFunc is a convenience method: it takes the function,

//creates an http handler from it and adds it to DefaultServeMux

})

http.ListenAndServe(":8080", nil)

//addr can be an IP address followed by a port.

//A bare port means every IP address on the server.

//HandleFunc is a convenience method which registers a function to a path on the DefaultServeMux.

//DefaultServeMux is an http handler; everything related to the server in Go is an http handler.

//Since the second parameter of ListenAndServe, the handler, is nil, it uses DefaultServeMux.

//When we call ListenAndServe, it uses DefaultServeMux as its root handler and

//determines which code block needs to be executed based on the request path.

}

The above code prints hello world on the server terminal.

Adding a new handler goodbye

package main

import (

"log"

"net/http"

)

func main() {

http.HandleFunc("/", func(http.ResponseWriter, *http.Request) {

log.Println("hello world")

})

http.HandleFunc("/goodbye", func(http.ResponseWriter, *http.Request) {

log.Println("goodbye")

})

http.ListenAndServe(":8080", nil)

}

When the above code is run, goodbye is logged on the server when the URL path is /goodbye. For any other path, hello world is logged.
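The matching rule can be checked without starting a server. The sketch below registers the same two patterns on a fresh ServeMux and sends in-memory requests with the net/http/httptest package. Unlike the handlers above, these write the text to the response so the match is visible; `newMux` and `get` are helper names made up for this example.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// newMux registers "/" and "/goodbye" on a fresh ServeMux.
func newMux() *http.ServeMux {
	mux := http.NewServeMux()
	mux.HandleFunc("/", func(rw http.ResponseWriter, r *http.Request) {
		fmt.Fprint(rw, "hello world")
	})
	mux.HandleFunc("/goodbye", func(rw http.ResponseWriter, r *http.Request) {
		fmt.Fprint(rw, "goodbye")
	})
	return mux
}

// get sends an in-memory GET request for path and returns the response body.
func get(mux *http.ServeMux, path string) string {
	rec := httptest.NewRecorder()
	mux.ServeHTTP(rec, httptest.NewRequest(http.MethodGet, path, nil))
	return rec.Body.String()
}

func main() {
	mux := newMux()
	for _, p := range []string{"/", "/goodbye", "/anything"} {
		fmt.Println(p, "->", get(mux, p))
	}
}
```

The pattern "/" ends in a slash, so it matches every path that has no more specific pattern, while "/goodbye" matches only that exact path.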

Using the ResponseWriter interface and the Request struct to read the request and write the response. https://pkg.go.dev/net/http#ResponseWriter https://pkg.go.dev/net/http#Request

We change the handler function for the "/" URL path so that its parameters are named: func(rw http.ResponseWriter, r *http.Request)

rw (short for ResponseWriter): Represents the http.ResponseWriter interface, which is used to write the HTTP response back to the client.

r (short for Request): Represents a pointer to an http.Request struct, which contains details about the HTTP request made by the client.

Next we use ioutil to read the request body. (Since Go 1.16, io.ReadAll is the preferred equivalent of ioutil.ReadAll.)

package main

import (

"fmt"

"io/ioutil"

"log"

"net/http"

)

func main() {

http.HandleFunc("/", func(rw http.ResponseWriter, r *http.Request) {

log.Println("hello world")

// Adding response writer and request variables to the http handler.

d, err := ioutil.ReadAll(r.Body)

if err != nil {

rw.WriteHeader(http.StatusBadRequest)

rw.Write([]byte("Bazinga!"))

//same things can be written replacing the above two lines and using the

//http error package.

//http.Error(rw, "Bazinga!", http.StatusBadRequest)

return

}

fmt.Fprintf(rw, "Hello %s", d)

})

http.HandleFunc("/goodbye", func(http.ResponseWriter, *http.Request) {

log.Println("goodbye")

})

http.ListenAndServe(":8080", nil)

//addr can be an IP address followed by a port.

//A bare port means every IP address on the server.

}

We will move the contents of the HandleFunc into an independent object, keeping all the handlers together inside a folder called handlers. We create a new file called hello.go. Next we need to create a struct for the handler. Under the hood, http.HandleFunc converts the function that we pass as a parameter into an http.Handler and registers it with the server.

In the documentation, http.Handler is an interface with a single method. https://pkg.go.dev/net/http#Handler

type Handler interface {

ServeHTTP(ResponseWriter, *Request)

}

So to create a handler, we need to create a struct which implements the Handler interface. hello.go looks like this:

package handlers

import (

"fmt"

"io/ioutil"

"log"

"net/http"

)

type Hello struct {

}

//ServeHTTP satisfies the http.Handler interface; it has no return values

func (h *Hello) ServeHTTP(rw http.ResponseWriter, r *http.Request) {

//adding the contents of HandleFunc here.

log.Println("hello world")

// Adding response writer and request variables to the http handler.

d, err := ioutil.ReadAll(r.Body)

if err != nil {

rw.WriteHeader(http.StatusBadRequest)

rw.Write([]byte("Bazinga!"))

//same things can be written replacing the above two lines and using the

//http error package.

//http.Error(rw, "Bazinga!", http.StatusBadRequest)

return

}

fmt.Fprintf(rw, "Hello %s", d)

}

Refactoring hello.go to make it modular by adding dependency injection. We define a new function called NewHello, which takes a logger and returns a pointer to a Hello handler.

package handlers

import (

"fmt"

"io/ioutil"

"log"

"net/http"

)

type Hello struct {

l *log.Logger

}

func NewHello(l *log.Logger) *Hello {

return &Hello{l}

}

//Adding method which satisfies the interface http.Handler, it has no return parameters

func (h *Hello) ServeHTTP(rw http.ResponseWriter, r *http.Request) {

h.l.Println("hello world")

// Adding response writer and request variables to the http handler.

d, err := ioutil.ReadAll(r.Body)

if err != nil {

rw.WriteHeader(http.StatusBadRequest)

rw.Write([]byte("Bazinga!"))

//same things can be written replacing the above two lines and using the

//http error package.

//http.Error(rw, "Bazinga!", http.StatusBadRequest)

return

}

fmt.Fprintf(rw, "Hello %s", d)

}

Next we initialize the logger variable l and the Hello handler. We create another handler called Goodbye similarly.

package main

import (

"log"

"net/http"

"os"

"github.com/dileepkushwaha/server/handlers"

)

func main() {

l := log.New(os.Stdout, "product-api", log.LstdFlags)

hh := handlers.NewHello(l)

gh := handlers.NewGoodbye(l)

sm := http.NewServeMux()

sm.Handle("/", hh)

sm.Handle("/goodbye", gh)

http.ListenAndServe(":8080", sm)

}
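The Goodbye handler itself is not listed in this post. A minimal sketch of what it might look like, following the same pattern as Hello, is given below. The response body text and the `tryGoodbye` helper are assumptions, and the sketch uses package main so that it runs on its own; in the project the type would live in the handlers package.

```go
package main

import (
	"bytes"
	"fmt"
	"log"
	"net/http"
	"net/http/httptest"
)

// Goodbye mirrors Hello: it holds the injected logger.
type Goodbye struct {
	l *log.Logger
}

// NewGoodbye returns a Goodbye handler that logs to l.
func NewGoodbye(l *log.Logger) *Goodbye {
	return &Goodbye{l}
}

// ServeHTTP satisfies http.Handler. The body text is an assumption.
func (g *Goodbye) ServeHTTP(rw http.ResponseWriter, r *http.Request) {
	g.l.Println("goodbye")
	rw.Write([]byte("goodbye"))
}

// tryGoodbye sends one in-memory request and returns what was logged
// and what was written to the response.
func tryGoodbye() (string, string) {
	var logs bytes.Buffer
	gh := NewGoodbye(log.New(&logs, "", 0))
	rec := httptest.NewRecorder()
	gh.ServeHTTP(rec, httptest.NewRequest(http.MethodGet, "/goodbye", nil))
	return logs.String(), rec.Body.String()
}

func main() {
	logged, body := tryGoodbye()
	fmt.Print(logged)
	fmt.Println(body)
}
```

Since the logger is injected, the same handler can log to os.Stdout in main and to a buffer when it is being checked, as tryGoodbye does.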

Next we set the performance-related attributes of the server, such as the idle, read and write timeouts.

package main

import (

"log"

"net/http"

"os"

"time"

"github.com/dileepkushwaha/server/handlers"

)

func main() {

l := log.New(os.Stdout, "product-api", log.LstdFlags)

hh := handlers.NewHello(l)

gh := handlers.NewGoodbye(l)

sm := http.NewServeMux()

sm.Handle("/", hh)

sm.Handle("/goodbye", gh)

s := &http.Server{

Addr: ":8080",

Handler: sm,

IdleTimeout: 120 * time.Second,

ReadTimeout: 1 * time.Second,

WriteTimeout: 1 * time.Second,

}

s.ListenAndServe()

}

Next we test graceful shutdown.

package main

import (

"context"

"log"

"net/http"

"os"

"os/signal"

"time"

"github.com/dileepkushwaha/server/handlers"

)

func main() {

l := log.New(os.Stdout, "product-api", log.LstdFlags)

hh := handlers.NewHello(l)

gh := handlers.NewGoodbye(l)

sm := http.NewServeMux()

sm.Handle("/", hh)

sm.Handle("/goodbye", gh)

s := &http.Server{

Addr: ":8080",

Handler: sm,

IdleTimeout: 120 * time.Second,

ReadTimeout: 1 * time.Second,

WriteTimeout: 1 * time.Second,

}

go func() {

err := s.ListenAndServe()

if err != nil {

l.Fatal(err)

}

}()

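//ListenAndServe() starts and does not block, as it runs in a goroutine.

//That also means main would return, and the server shut down, immediately

//unless we wait for something.

//We can use the os/signal package to register for certain signals.

//signal.Notify takes a channel and the signals to relay to it.

//The channel is buffered, because signal.Notify does not block when sending.

sigChan := make(chan os.Signal, 1)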

signal.Notify(sigChan, os.Interrupt)

signal.Notify(sigChan, os.Kill)

sig := <-sigChan

l.Println("Recieved Terminate Gracefull Shutdown", sig)

//Shutdown(ctx context.Context) error

//Wait up to 30 seconds for in-flight requests to finish.

tc, cancel := context.WithTimeout(context.Background(), 30*time.Second)

defer cancel()

s.Shutdown(tc)

}

We now get a message while stopping the server with Ctrl+C.

go run main.go

product-api2024/08/15 01:27:59 hello world

^Cproduct-api2024/08/15 01:28:26 Recieved Terminate Gracefull Shutdown interrupt

product-api2024/08/15 01:28:26 http: Server closed

Writing REST APIs. REST (Representational State Transfer) is an architectural approach for designing web services, proposed by Roy Fielding in 2000. It is often loosely described as JSON over HTTP.

We will make apis for an ecommerce coffee shop. We create a data directory and a file called products.go

package data

import (

"time"

)

// Product defines the structure for an API product

type Product struct {

ID int `json:"id"`

Name string `json:"name"`

Description string `json:"description"`

Price float32 `json:"price"`

SKU string `json:"sku"`

CreatedOn string `json:"-"`

UpdatedOn string `json:"-"`

DeletedOn string `json:"-"`

}

var productList = []*Product{

&Product{

ID: 1,

Name: "Latte",

Description: "Frothy milky coffee",

Price: 2.45,

SKU: "abc323",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

&Product{

ID: 2,

Name: "Espresso",

Description: "Short and strong coffee without milk",

Price: 1.99,

SKU: "fjd34",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

}

We create a new handler, products.go

package handlers

import (

"log"

"net/http"

)

type Products struct {

l *log.Logger

}

func NewProducts(l *log.Logger) *Products {

return &Products{l}

}

func (p *Products) ServeHTTP(rw http.ResponseWriter, r *http.Request) {

//

}

/data/products.go

package data

import (

"time"

)

// Product defines the structure for an API product

type Product struct {

ID int

Name string

Description string

Price float32

SKU string

CreatedOn string

UpdatedOn string

DeletedOn string

}

// GetProducts returns the list of products.

func GetProducts() []*Product {

return productList

}

var productList = []*Product{

&Product{

ID: 1,

Name: "Latte",

Description: "Frothy milky coffee",

Price: 2.45,

SKU: "abc323",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

&Product{

ID: 2,

Name: "Espresso",

Description: "Short and strong coffee without milk",

Price: 1.99,

SKU: "fjd34",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

}

We use json encoding. /handlers/products.go

package handlers

import (

"encoding/json"

"log"

"net/http"

"github.com/dileepkushwaha/server/data"

)

type Products struct {

l *log.Logger

}

func NewProducts(l *log.Logger) *Products {

return &Products{l}

}

func (p *Products) ServeHTTP(rw http.ResponseWriter, r *http.Request) {

lp := data.GetProducts()

d, err := json.Marshal(lp)

if err != nil {

http.Error(rw, "Unable to marshal json", http.StatusInternalServerError)

return

}

rw.Write(d)

}

While running curl command the following output is obtained.

curl localhost:8080 | jq

[

{

"ID": 1,

"Name": "Latte",

"Description": "Frothy milky coffee",

"Price": 2.45,

"SKU": "abc323",

"CreatedOn": "2024-08-15 10:55:12.207505324 +0000 UTC",

"UpdatedOn": "2024-08-15 10:55:12.207508393 +0000 UTC",

"DeletedOn": ""

},

{

"ID": 2,

"Name": "Espresso",

"Description": "Short and strong coffee without milk",

"Price": 1.99,

"SKU": "fjd34",

"CreatedOn": "2024-08-15 10:55:12.20750907 +0000 UTC",

"UpdatedOn": "2024-08-15 10:55:12.207509536 +0000 UTC",

"DeletedOn": ""

}

]

We will be using JSON struct tags in order to display the data according to our needs. /data/products.go

package data

import (

"time"

)

// Product defines the structure for an API product

type Product struct {

ID int `json:"id"`

Name string `json:"name"`

Description string `json:"description"`

Price float32 `json:"price"`

SKU string `json:"sku"`

CreatedOn string `json:"-"`

UpdatedOn string `json:"-"`

DeletedOn string `json:"-"`

}

// GetProducts returns a list of products

func GetProducts() []*Product {

return productList

}

// productList is a hard coded list of products for this

// example data source

var productList = []*Product{

&Product{

ID: 1,

Name: "Latte",

Description: "Frothy milky coffee",

Price: 2.45,

SKU: "abc323",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

&Product{

ID: 2,

Name: "Espresso",

Description: "Short and strong coffee without milk",

Price: 1.99,

SKU: "fjd34",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

}

Now we get the output like we wanted to.

curl localhost:8080 | jq

[

{

"id": 1,

"name": "Latte",

"description": "Frothy milky coffee",

"price": 2.45,

"sku": "abc323"

},

{

"id": 2,

"name": "Espresso",

"description": "Short and strong coffee without milk",

"price": 1.99,

"sku": "fjd34"

}

]

The NewEncoder function, instead of returning a slice of bytes and an error like Marshal, returns an Encoder whose Encode method writes the output to the given io.Writer:

func NewEncoder(w io.Writer) *Encoder

Using an Encoder, we don't have to hold the whole output in a byte slice of our own before writing it. With a large JSON object, the Marshal approach can slow things down; an Encoder can be relatively faster.
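The two approaches can be compared side by side. The sketch below encodes the same value both ways; a bytes.Buffer stands in for the http.ResponseWriter, and the trimmed-down `Product` type and the `viaMarshal` and `viaEncoder` helpers are made up for this example.

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
)

// Product is a trimmed-down version of the struct used in this post.
type Product struct {
	ID   int    `json:"id"`
	Name string `json:"name"`
}

// viaMarshal builds the whole JSON document in memory and returns it.
func viaMarshal(p Product) (string, error) {
	d, err := json.Marshal(p)
	return string(d), err
}

// viaEncoder writes the JSON straight to a writer; the buffer here
// stands in for an http.ResponseWriter.
func viaEncoder(p Product) (string, error) {
	var buf bytes.Buffer
	err := json.NewEncoder(&buf).Encode(p)
	return buf.String(), err
}

func main() {
	p := Product{ID: 1, Name: "Latte"}
	m, _ := viaMarshal(p)
	e, _ := viaEncoder(p)
	fmt.Printf("%q\n%q\n", m, e)
}
```

The only difference in the output is that Encode appends a newline after the JSON value.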

package data

import (

"encoding/json"

"io"

"time"

)

// Product defines the structure for an API product

type Product struct {

ID int `json:"id"`

Name string `json:"name"`

Description string `json:"description"`

Price float32 `json:"price"`

SKU string `json:"sku"`

CreatedOn string `json:"-"`

UpdatedOn string `json:"-"`

DeletedOn string `json:"-"`

}

// Products is a collection of Product

type Products []*Product

// ToJSON serializes the contents of the collection to JSON

// NewEncoder provides better performance than json.Marshal as it does not

// have to buffer the output into an in memory slice of bytes

// this reduces allocations and the overheads of the service

//

// https://golang.org/pkg/encoding/json/#NewEncoder

func (p *Products) ToJSON(w io.Writer) error {

e := json.NewEncoder(w)

return e.Encode(p)

}

// GetProducts returns a list of products

func GetProducts() Products {

return productList

}

// productList is a hard coded list of products for this

// example data source

var productList = []*Product{

&Product{

ID: 1,

Name: "Latte",

Description: "Frothy milky coffee",

Price: 2.45,

SKU: "abc323",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

&Product{

ID: 2,

Name: "Espresso",

Description: "Short and strong coffee without milk",

Price: 1.99,

SKU: "fjd34",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

}

Refactoring /handlers/products.go

package handlers

import (

"log"

"net/http"

"github.com/dileepkushwaha/server/data"

)

type Products struct {

l *log.Logger

}

func NewProducts(l *log.Logger) *Products {

return &Products{l}

}

func (p *Products) ServeHTTP(rw http.ResponseWriter, r *http.Request) {

lp := data.GetProducts()

err := lp.ToJSON(rw)

if err != nil {

http.Error(rw, "Unable to marshal json", http.StatusInternalServerError)

}

}

/data/products.go

package data

import (

"encoding/json"

"io"

"time"

)

// Product defines the structure for an API product

type Product struct {

ID int `json:"id"`

Name string `json:"name"`

Description string `json:"description"`

Price float32 `json:"price"`

SKU string `json:"sku"`

CreatedOn string `json:"-"`

UpdatedOn string `json:"-"`

DeletedOn string `json:"-"`

}

// Products is a collection of Product

type Products []*Product

// ToJSON serializes the contents of the collection to JSON

// NewEncoder provides better performance than json.Marshal as it does not

// have to buffer the output into an in memory slice of bytes

// this reduces allocations and the overheads of the service

//

// https://golang.org/pkg/encoding/json/#NewEncoder

func (p *Products) ToJSON(w io.Writer) error {

e := json.NewEncoder(w)

return e.Encode(p)

}

// GetProducts returns a list of products

func GetProducts() Products {

return productList

}

// productList is a hard coded list of products for this

// example data source

var productList = []*Product{

&Product{

ID: 1,

Name: "Latte",

Description: "Frothy milky coffee",

Price: 2.45,

SKU: "abc323",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

&Product{

ID: 2,

Name: "Espresso",

Description: "Short and strong coffee without milk",

Price: 1.99,

SKU: "fjd34",

CreatedOn: time.Now().UTC().String(),

UpdatedOn: time.Now().UTC().String(),

},

}

How do we read JSON? We implement GET and POST requests:

Refactoring: adding an internal getProducts function

func (p *Products) getProducts(rw http.ResponseWriter, h *http.Request) {

lp := data.GetProducts()

err := lp.ToJSON(rw)

if err != nil {

http.Error(rw, "Unable to marshal json", http.StatusInternalServerError)

}

}

Until Go 1.22, the ServeMux in the Go standard library had no support for routing on REST verbs (HTTP methods). People opted to use frameworks like Gin and Gorilla mux.

Implementing REST verb management in vanilla golang

/handlers/products.go

func (p *Products) ServeHTTP(rw http.ResponseWriter, r *http.Request) {

if r.Method == http.MethodGet {

p.getProducts(rw, r)

return

}

//catch all

rw.WriteHeader(http.StatusMethodNotAllowed)

}

When the GET method is curled, the response is the same as before. When the DELETE method is used, an error is received.

curl localhost:8080 -XDELETE -v |jq

* Trying 127.0.0.1:8080...

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

0 0 0 0 0 0 0 0 --:--:-- --:--:-- --:--:-- 0* Connected to localhost (127.0.0.1) port 8080 (#0)

> DELETE / HTTP/1.1

> Host: localhost:8080

> User-Agent: curl/7.81.0

> Accept: */*

>

* Mark bundle as not supporting multiuse

< HTTP/1.1 405 Method Not Allowed

< Date: Thu, 15 Aug 2024 13:17:41 GMT

< Content-Length: 0

<

0 0 0 0 0 0 0 0 --:--:-- --:--:-- --:--:-- 0

* Connection #0 to host localhost left intact

Deploying applications in Kubernetes is challenging. A simple application like WordPress would need several different files, such as deployment.yaml, services.yaml, pv.yaml, pvc.yaml and secret.yaml. Managing them throughout the lifecycle of the application is confusing. Even if all the definitions are put into one file, changing the configuration and the values remains a problem.

Helm helps you manage Kubernetes applications — Helm Charts help you define, install, and upgrade even the most complex Kubernetes application.

Charts are easy to create, version, share, and publish — so start using Helm and stop the copy-and-paste.

~ helm.sh

Helm is like a meme template: you create it once, then reuse and distribute it several times.

curl https://baltocdn.com/helm/signing.asc | gpg --dearmor | sudo tee /usr/share/keyrings/helm.gpg > /dev/null

sudo apt-get install apt-transport-https --yes

echo "deb [arch=$(dpkg --print-architecture) signed-by=/usr/share/keyrings/helm.gpg] https://baltocdn.com/helm/stable/debian/ all main" | sudo tee /etc/apt/sources.list.d/helm-stable-debian.list

sudo apt-get update

sudo apt-get install helm

# check helm version installation

helm version

# Search for a helm chart on [artifact hub](https://artifacthub.io/)

helm search hub wordpress

# Add a helm repository

helm repo add brigade https://brigadecore.github.io/charts

# Search in an added helm repository

helm search repo brigade

# List added repos

helm repo list

# Update an added repo

helm repo update

# Install a helm chart

helm install my-wordpress bitnami/wordpress

# check status of chart installation

helm status my-wordpress

# Uninstall a chart

helm uninstall my-wordpress

By default, the downloaded chart archive is cached in the .cache directory under the home directory of the user running Helm (root here).

.cache/helm/repository

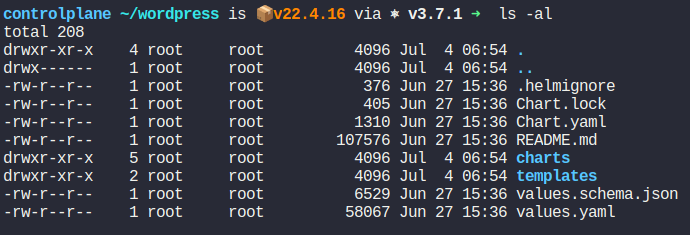

The Helm chart is downloaded as a compressed tar archive (.tgz). All Helm charts have the following structure.

The README.md file, as the name suggests, includes the instructions the author wants the user to follow. These are displayed on the hub as well.

Chart.yaml consists of metadata about the Helm chart, such as its name, version, and description.

values.yaml contains the default values for the chart. These can be overridden during installation or upgrade.

# Show the chart's default values; you can override any of them in a YAML-formatted file and pass that file during installation

helm show values bitnami/wordpress

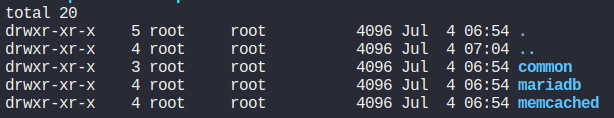

The chart directory has the following structure.

The charts/ directory is used to store dependent charts. If your chart depends on other charts, they are placed in this directory. It is also used for managing subcharts in a multi-chart setup.

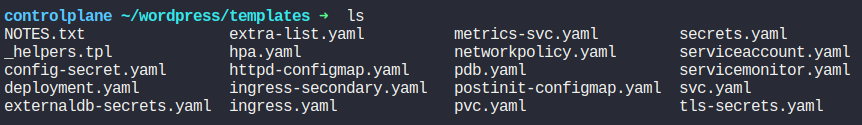

The templates directory contains the Kubernetes manifest templates. Helm uses these templates to generate the final YAML files that are applied to the Kubernetes cluster. Templates can use Go templating to include dynamic values.
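To get a feel for what "Go templating" means here, the sketch below renders a tiny manifest fragment with Go's standard text/template package. It is only an approximation of what Helm does: Helm adds many extra functions and built-in objects on top. The manifest string and the render helper are hypothetical names I picked for illustration.

```go
package main

import (
	"bytes"
	"fmt"
	"text/template"
)

// manifest is a hypothetical fragment of templates/deployment.yaml.
const manifest = `replicas: {{ .Values.replicaCount }}
image: "{{ .Values.image.repository }}:{{ .Values.image.tag }}"
`

// render executes the template against a values tree, exposing it
// under .Values the way Helm exposes the contents of values.yaml.
func render(values map[string]any) (string, error) {
	tmpl, err := template.New("deployment").Parse(manifest)
	if err != nil {
		return "", err
	}
	var out bytes.Buffer
	if err := tmpl.Execute(&out, map[string]any{"Values": values}); err != nil {
		return "", err
	}
	return out.String(), nil
}

func main() {
	// These values stand in for what would normally live in values.yaml.
	values := map[string]any{
		"replicaCount": 3,
		"image": map[string]any{
			"repository": "myimage",
			"tag":        "latest",
		},
	}
	out, err := render(values)
	if err != nil {
		panic(err)
	}
	fmt.Print(out)
}
```

Changing a value in the map changes the rendered YAML without touching the template, which is the whole point of keeping values separate from templates.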

#### Some common files and their usage in templates/

In Helm, there are several ways to pass values during the installation or upgrade of a chart. These methods allow you to customize the deployment without modifying the chart’s templates directly. Here are the primary ways to pass values in Helm:

replicaCount: 3
image:
  repository: myimage
  tag: latest
  pullPolicy: IfNotPresent
service:
  type: ClusterIP
  port: 80

helm install myrelease mychart -f custom-values.yaml

replicaCount: 2
image:
  tag: stable

4. Using Environment Variables

You can pass environment variables into Helm values by using the --set flag with environment variables. This is particularly useful in CI/CD environments.

```bash
helm install myrelease mychart --set image.tag=$IMAGE_TAG
```

helm install myrelease mychart -f values.yaml -f custom-values.yaml --set image.tag=stable

helm install myrelease mychart -f https://example.com/config/custom-values.yaml

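When several methods are combined, the precedence from highest to lowest is: values passed with --set, then values files passed with -f (when several files are given, the last one wins), then the chart's default values.yaml.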

The Helm chart lifecycle can be summarized by the following commands.

#Create:

helm create

#Package:

helm package

#Upload:

helm repo index

#Install:

helm install

#Upgrade:

helm upgrade

#List:

helm list

#Rollback:

helm rollback

#Uninstall:

helm uninstall

#First, use the Helm CLI to create a new chart. This command generates a boilerplate structure for your chart.

helm create mychart

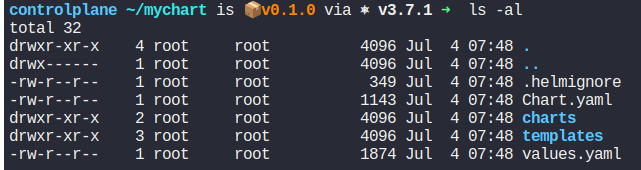

This creates a directory called mychart with the following structure:

We will edit this boilerplate code.

# There are some major differences between Helm 2 and Helm 3

# apiVersion v2 indicates a Helm 3 chart; Helm 2 charts use apiVersion v1

apiVersion: v2

name: mychart

description: A Helm chart for Kubernetes

type: application

version: 0.1.0

appVersion: "1.16.0"

### It's a good practice to add a maintainers section with an email
maintainers:
  - email: johnsmith@matrix.com
    name: john smith

We will remove all the files from the templates/ directory and add our own Kubernetes resource files.

rm -rf templates/*

Add deployment.yaml, service.yaml, values.yaml and any other YAML files required by the application. Make sure the values can be changed through values.yaml by using template directives (the Go template language) in the resource files.

helm install mychart ./mychart

Kubernetes YAML files can quickly become overwhelming due to the complexity and volume of configurations required for even simple applications. Helm simplifies this process by providing a standardized way to package, configure, and manage Kubernetes applications. With Helm, you can create reusable charts, easily upgrade and roll back deployments, and manage complex configurations efficiently.

By following the steps and examples provided in this blog, you can get started with Helm, create your own Helm charts, and streamline your Kubernetes deployments. Helm not only saves time but also reduces errors and improves consistency across your deployments.

Always use helm lint to validate before deploying.

]]>

I purchased a Made in India RISC-V development board from CDAC a few weeks ago for Rs 999 ($12 USD). It is basically an Arduino Uno killer. I ran a hello world on it. A few problems came up, which I think are OS related. I am using Ubuntu 22.04.

The first issue was a permissions one: ttyUSB0 did not have adequate permissions.

$ sudo chown username /dev/ttyUSB0

The second issue was that the code, after verification, couldn't be uploaded to the microcontroller. Clicking the upload icon in the Arduino IDE returns a permission error. To push the code, one has to upload it manually using a processor through the dropdown menu.

Product description